一、背景

k8s运维比较难的点在于当worker节点资源使用率突然爆满(常规是内存使用率100%)时引发节点NotReady状态。这对于该节点上的pod来说就是一场灾难。很多人说那我做好pod的containers的资源限制不就行了吗,这么做可行但是肯定是不够了。除非考虑给予request和limit资源大小一致(这种使用场景不考虑)。官方对此有解决方法,通过初始化kubelet服务。参考文档:https://kubernetes.io/zh/docs/tasks/administer-cluster/out-of-resource/

二、节点资源上限引发pod驱逐

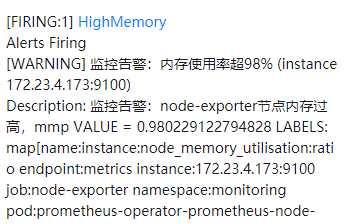

2.1EKS集群告警信息

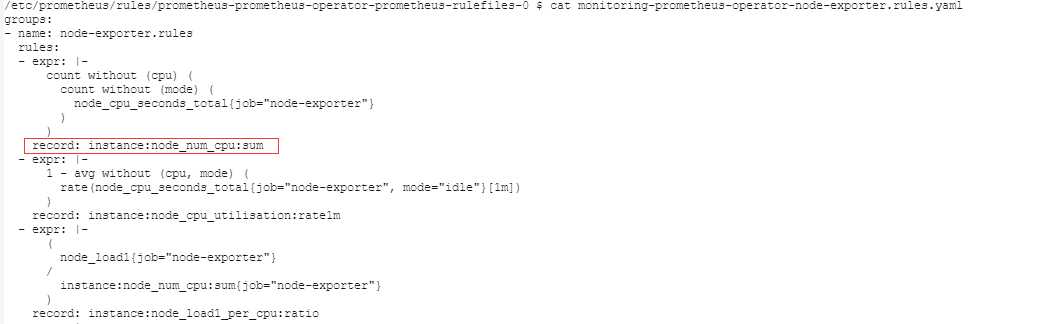

备注:可以看到有个节点资源使用率达到上限值,这里要说下prometheus-operator默认对节点内存仅做了record记录告警,参考文档:https://prometheus.io/docs/prometheus/latest/configuration/recording_rules/

示例:

备注:pod默认一般情况下的状态只有Pending,Running,Succeeded,Failed,Unknown,出现Evicted则表示被kubelet驱逐。READY 0/1表示容器释放,这样的话其生成的底层cgroups资源限制也会相应的释放掉,从而缓解节点资源压力。

2.3手动清除无效pod

[k8s-prod-de@ip-172-20-21-242 xm]$ kubectl delete pods brokersview-frontend-api-87798c798-v5nmd -n project-brokersview

pod "brokersview-frontend-api-87798c798-v5nmd" deleted

三、kubelet配置硬驱逐

k8s官方给出两种驱逐方式,分别是软驱逐和硬驱逐。我在查询相关资料时发现一个集群用硬驱逐的方式较大,而以上情形触发kubelet进行驱逐的话也用的是硬驱逐memory.available<200Mi方式。它有六种驱逐策略分别是内存,磁盘(包含4类驱逐策略),pid方式。官方文档:https://kubernetes.io/zh/docs/tasks/administer-cluster/out-of-resource/

四、节点预先配置驱逐

因为我用的是aws的EKS集群,其内置了硬驱逐模式,而目前所引发的故障官方预先配置好的策略似乎也够用,如果不够用的话可能需要针对性的调整。而对于aws的EKS而言官方也给出了两种方式去配置驱逐。

4.1eksclt定义驱逐策略

参考文档:https://eksctl.io/usage/customizing-the-kubelet/#customizing-kubelet-configuration_1

以下示例配置文件创建了一个节点组,该节点组为kubelet保留了300mvCPU,300Mi内存和1Gi临时存储。300mvCPU,用于操作系统系统守护程序300Mi的内存和1Gi临时存储;并在200Mi可用内存少于根文件系统或根文件系统少于10%的情况下驱逐Pod 。

apiVersion: eksctl.io/v1alpha5

kind: ClusterConfig

metadata:

name: dev-cluster-1

region: eu-north-1

nodeGroups:

- name: ng-1

instanceType: m5a.xlarge

desiredCapacity: 1

kubeletExtraConfig:

kubeReserved:

cpu: "300m"

memory: "300Mi"

ephemeral-storage: "1Gi"

kubeReservedCgroup: "/kube-reserved"

systemReserved:

cpu: "300m"

memory: "300Mi"

ephemeral-storage: "1Gi"

evictionHard:

memory.available: "200Mi"

nodefs.available: "10%"

featureGates:

TaintBasedEvictions: true

RotateKubeletServerCertificate: true # has to be enabled, otherwise it will be disabled

4.2控制台创建的节点手动定义驱逐

https://github.com/awslabs/amazon-eks-ami/blob/master/files/bootstrap.sh#L354

您可以透过 –kubelet-extra-args 的参数来调整对节点初始化中 kubelet evictionHard 的大小:

范例:

# /etc/eks/bootstrap.sh k8s-prod-hk --kubelet-extra-args "--kube-reserved memory=0.5Gi,ephemeral-storage=1Gi --system-reserved memory=0.5Gi,ephemeral-storage=1Gi --eviction-hard memory.available<200Mi,nodefs.available<10%"

4.3自定义kubectl配置文件方式

[root@ip-172-20-3-32 ~]# vi /etc/kubernetes/kubelet/kubelet-config.json

#修改对应的值,一般修改以下值:

"memory.available": "100Mi",

备注:官方定义的值也挺小的,而且该配置文件中没有看到给系统及kubelet预留的资源(可以有效防止OOM,引发核心进程被kill)

五、总结

像EKS默认已经给出了驱逐策略,但是给的较低,如果服务稳定性要求非常高建议使用eksctl去定义驱逐策略。同时回顾昨天的问题看起来是引发驱逐策略,并驱逐了一个pod。如果该节点同时有多个pod出现资源使用率过高时是否kubelet还未做出有效的驱逐时节点就宕机了。这些猜想只能在以后运维的过程中去发现探索了。附EKS集群的kubelet配置文件:

[ec2-user@ip-172-23-4-188 ~]$ cat /etc/kubernetes/kubelet/kubelet-config.json

{

"kind": "KubeletConfiguration",

"apiVersion": "kubelet.config.k8s.io/v1beta1",

"address": "0.0.0.0",

"authentication": {

"anonymous": {

"enabled": false

},

"webhook": {

"cacheTTL": "2m0s",

"enabled": true

},

"x509": {

"clientCAFile": "/etc/kubernetes/pki/ca.crt"

}

},

"authorization": {

"mode": "Webhook",

"webhook": {

"cacheAuthorizedTTL": "5m0s",

"cacheUnauthorizedTTL": "30s"

}

},

"clusterDomain": "cluster.local",

"hairpinMode": "hairpin-veth",

"readOnlyPort": 0,

"cgroupDriver": "cgroupfs",

"cgroupRoot": "/",

"featureGates": {

"RotateKubeletServerCertificate": true

},

"protectKernelDefaults": true,

"serializeImagePulls": false,

"serverTLSBootstrap": true,

"tlsCipherSuites": [

"TLS_ECDHE_ECDSA_WITH_AES_128_GCM_SHA256",

"TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256",

"TLS_ECDHE_ECDSA_WITH_CHACHA20_POLY1305",

"TLS_ECDHE_RSA_WITH_AES_256_GCM_SHA384",

"TLS_ECDHE_RSA_WITH_CHACHA20_POLY1305",

"TLS_ECDHE_ECDSA_WITH_AES_256_GCM_SHA384",

"TLS_RSA_WITH_AES_256_GCM_SHA384",

"TLS_RSA_WITH_AES_128_GCM_SHA256"

],

"clusterDNS": [

"10.100.0.10"

],

"evictionHard": {

"memory.available": "100Mi",

"nodefs.available": "10%",

"nodefs.inodesFree": "5%"

},

"kubeReserved": {

"cpu": "80m",

"ephemeral-storage": "1Gi",

"memory": "893Mi"

},

"maxPods": 58

}

留言